In the quest to modernize IT infrastructure, a growing number of users are moving to containerized applications, with Kubernetes emerging as the preferred choice for container orchestration. However, because most applications have traditionally run in virtualized environments (or on hyperconverged infrastructure), many users face a dilemma: should they run Kubernetes on their existing virtualized infrastructure, or replace it with bare-metal servers?

In this article, we explore this question by comparing Kubernetes running on virtual machines (VMs) with Kubernetes running on bare-metal servers. We first examine the IT architecture of each approach, then compare Kubernetes capabilities on both platforms, including performance, availability, agility, security, and cost.

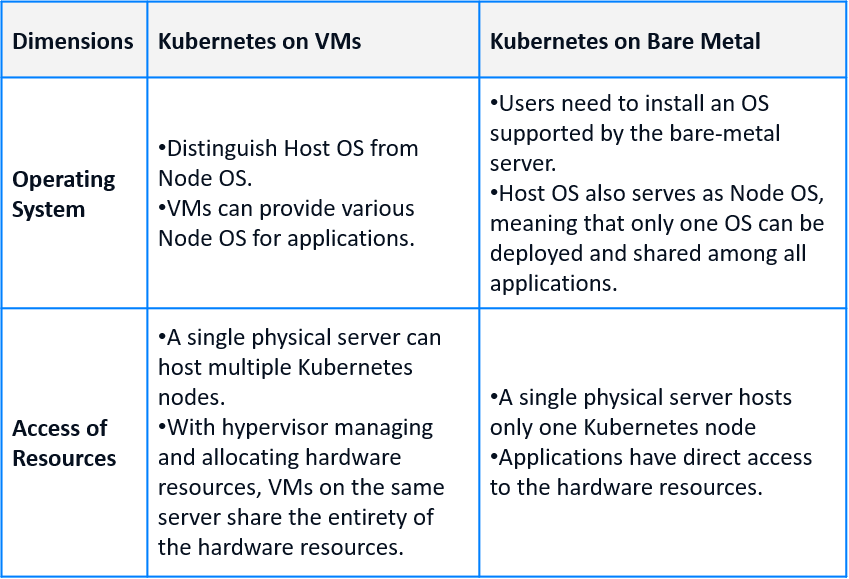

Comparison of Architecture

The primary difference between running Kubernetes on VMs and on bare-metal servers lies in the virtualization layer. In a VM-based deployment, Kubernetes nodes run on top of a hypervisor; in a bare-metal deployment, they run directly on the physical servers. This distinction significantly affects how workloads share the operating system and hardware resources.

Differences in OS Layer

For Kubernetes on VMs, the host OS runs the VMs, while the Node OS that hosts Kubernetes is installed inside each VM. As a result, VMs on the same physical infrastructure can each run their own Node OS, allowing Kubernetes clusters and applications that require different versions to coexist.

On the other hand, when deploying Kubernetes on bare metal, users install an OS supported by the server hardware directly on the server. This OS also serves as the Node OS, which means that all workloads running on that Kubernetes node share the same OS installed on the bare-metal server.

Differences in Accessing Resources

In a virtualized infrastructure, hypervisors manage and allocate hardware resources. A physical server can host multiple VMs, each allocated a portion of the server's hardware, and resources can be requested and released dynamically.

In contrast, bare-metal servers make hardware resources directly accessible to, and manageable by, applications. Because each physical server hosts only one Kubernetes node, that node has exclusive access to all of the server's hardware. Resource allocation in a bare-metal Kubernetes cluster is therefore typically static: hardware cannot be shared between clusters, which can leave resources idle and wasted.
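To make the resource-waste point concrete, here is a minimal Python sketch under idealized assumptions: CPU cores are the only resource, every server has the same core count, each bare-metal server is dedicated to a single cluster, and a virtualized pool can share servers between clusters with no hypervisor overhead or packing fragmentation. The function names and numbers are hypothetical and do not come from any real sizing tool.

```python
import math


def bare_metal_servers(cluster_cores, cores_per_server):
    """Servers are dedicated to one cluster, so each cluster's CPU demand
    is rounded up to whole servers independently."""
    return sum(math.ceil(cores / cores_per_server) for cores in cluster_cores)


def pooled_servers(cluster_cores, cores_per_server):
    """VMs from different clusters can share servers, so only the combined
    demand is rounded up (an idealized lower bound)."""
    return math.ceil(sum(cluster_cores) / cores_per_server)


def idle_cores(servers, cores_per_server, cluster_cores):
    """Cores that are provisioned but not needed by any cluster."""
    return servers * cores_per_server - sum(cluster_cores)


if __name__ == "__main__":
    demand = [40, 20, 10]  # hypothetical CPU cores needed by three clusters
    per_server = 32        # hypothetical cores per physical server
    for label, fn in (("bare metal", bare_metal_servers), ("pooled VMs", pooled_servers)):
        n = fn(demand, per_server)
        print(f"{label}: {n} servers, {idle_cores(n, per_server, demand)} idle cores")
```

With these made-up numbers, dedicated servers need 4 machines (58 idle cores) while the shared pool needs 3 (26 idle cores). The model deliberately ignores real constraints such as minimum node counts per cluster for high availability.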

Overall, deploying Kubernetes on bare-metal servers involves fewer layers in the IT architecture than a VM environment. However, this does not make bare metal inherently more Kubernetes-friendly: the absence of a virtualization layer is a double-edged sword when it comes to resource access. Meanwhile, VMs improve Kubernetes' compatibility with a wider range of hardware devices and operating systems. Infrastructure and Operations (I&O) teams should weigh these factors early on when evaluating Kubernetes deployment options for specific application scenarios.

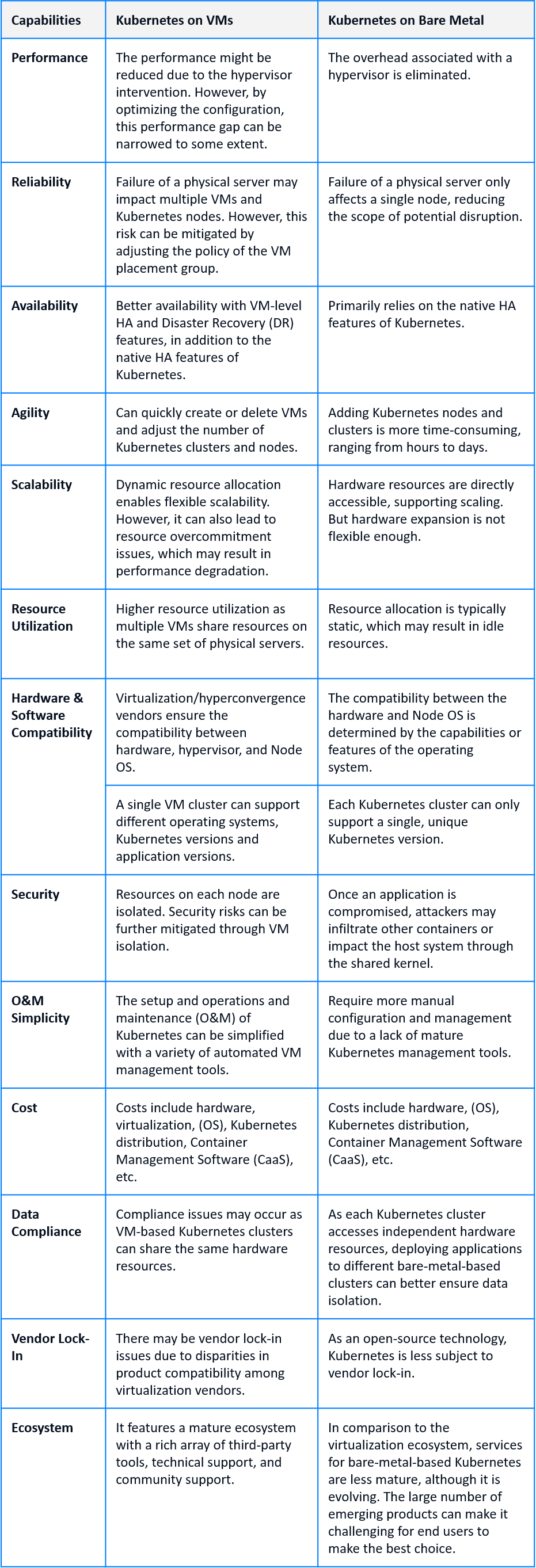

Comparison of Key Capabilities

Due to these architectural differences, Kubernetes on VMs and on bare metal differs in key capabilities such as I/O performance, reliability, availability, agility, scalability, security, and cost. We explore these capabilities in more depth below, and the chart below compares the two platforms across 13 capabilities.

Performance

Although bare metal eliminates hypervisor overhead, this does not mean that VMs cannot support performance-sensitive, data-intensive applications. By allocating sufficient resources to VMs and correctly configuring CPU, memory, and I/O scheduling policies, the performance gap between Kubernetes on VMs and on bare metal can be narrowed.

Reliability

In a VM-based Kubernetes deployment, one physical server hosts several VMs, which may belong to different Kubernetes clusters. A single server failure therefore affects every VM on that server and could disrupt multiple Kubernetes clusters simultaneously. Users can mitigate this single point of failure by configuring VM placement group policies that spread the VMs of each Kubernetes cluster across different physical servers.
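The effect of a placement group policy can be sketched in a few lines of Python. This is a hypothetical model, not any hypervisor's placement engine: `spread_placement` assigns each cluster's node VMs to distinct hosts round-robin (an anti-affinity rule), `anti_affinity_violations` flags hosts running two or more nodes of the same cluster, and `nodes_lost` shows how many nodes each cluster loses when one host fails.

```python
from collections import Counter


def spread_placement(clusters, hosts):
    """Place each cluster's node VMs on distinct hosts (round-robin), in the
    spirit of an anti-affinity placement group.

    clusters: dict of cluster name -> number of node VMs
    hosts: list of physical host names
    Returns a dict of VM name -> (host, cluster).
    """
    placement = {}
    offset = 0
    for cluster, n_vms in clusters.items():
        if n_vms > len(hosts):
            raise ValueError(f"{cluster}: {n_vms} VMs but only {len(hosts)} hosts")
        for i in range(n_vms):
            # Consecutive indices modulo len(hosts) never repeat within one cluster.
            host = hosts[(offset + i) % len(hosts)]
            placement[f"{cluster}-node{i}"] = (host, cluster)
        offset += n_vms
    return placement


def anti_affinity_violations(placement):
    """Return sorted (host, cluster) pairs where one host runs 2+ nodes of a cluster."""
    counts = Counter(placement.values())
    return sorted(key for key, n in counts.items() if n > 1)


def nodes_lost(placement, failed_host):
    """How many nodes each Kubernetes cluster loses if failed_host goes down."""
    return dict(Counter(cluster for host, cluster in placement.values() if host == failed_host))


if __name__ == "__main__":
    placement = spread_placement({"prod": 3, "dev": 3}, ["host-a", "host-b", "host-c"])
    print(anti_affinity_violations(placement))  # []
    print(nodes_lost(placement, "host-a"))      # {'prod': 1, 'dev': 1}
```

A host failure still touches both clusters, but each loses only one of its three nodes, so a correctly sized cluster can keep running.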

On the other hand, since a bare-metal server hosts only one Kubernetes node, a server failure affects only that single node, so its impact is more contained, provided the Kubernetes cluster is deployed correctly.

Availability

Bare-metal deployments generally rely on the HA capabilities of Kubernetes itself to ensure availability. These capabilities include:

- Automatic detection and restart or rescheduling of failed Pods.

- Dynamic scaling of Pod instances according to workload requirements.

- Built-in load balancing that distributes traffic across Pod instances.

- Support for rolling updates and automatic rollbacks.
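The first two capabilities in the list above follow a reconciliation pattern: compare the desired number of Pods with what is actually running, then create or remove Pods to close the gap. The Python class below is a hypothetical, greatly simplified model of that idea; it is not the real Kubernetes controller or scheduler logic, and `ToyReplicaController` and its least-loaded placement rule are illustrative assumptions.

```python
class ToyReplicaController:
    """Hypothetical, greatly simplified model of Kubernetes self-healing:
    keep `replicas` Pods running on healthy nodes. Not the real controller
    or scheduler logic."""

    def __init__(self, replicas):
        self.replicas = replicas
        self.pods = {}  # Pod name -> node name
        self._next_id = 0

    def reconcile(self, healthy_nodes):
        if not healthy_nodes:
            raise RuntimeError("no healthy nodes to schedule Pods on")
        # Pods on failed nodes are treated as lost and must be replaced.
        self.pods = {p: n for p, n in self.pods.items() if n in healthy_nodes}
        # Scale down: drop surplus Pods (this toy simply removes the oldest first).
        while len(self.pods) > self.replicas:
            self.pods.pop(next(iter(self.pods)))
        # Scale up or heal: place each new Pod on the least-loaded healthy node.
        while len(self.pods) < self.replicas:
            load = {n: 0 for n in healthy_nodes}
            for n in self.pods.values():
                load[n] += 1
            target = min(healthy_nodes, key=load.get)
            self.pods[f"pod-{self._next_id}"] = target
            self._next_id += 1
        return dict(self.pods)


if __name__ == "__main__":
    ctl = ToyReplicaController(replicas=3)
    print(ctl.reconcile(["node-1", "node-2", "node-3"]))
    # {'pod-0': 'node-1', 'pod-1': 'node-2', 'pod-2': 'node-3'}
    print(ctl.reconcile(["node-1", "node-3"]))  # node-2 has failed
    # {'pod-0': 'node-1', 'pod-2': 'node-3', 'pod-3': 'node-1'}
    ctl.replicas = 5  # scale out
    print(len(ctl.reconcile(["node-1", "node-3"])))  # 5
```

In real Kubernetes, these responsibilities are split between controllers (such as the ReplicaSet controller) and the scheduler, which weigh far more factors than node load.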

In contrast, VM-based deployments can add hypervisor-level HA features on top of the Kubernetes capabilities listed above to further enhance cluster availability. These include dynamic resource scheduling (DRS), live migration (vMotion), and automatic failure recovery (HA).
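Automatic failure recovery at the hypervisor level can be pictured as restarting a failed host's VMs on surviving hosts that still have room. The sketch below is a hypothetical model, not how any specific hypervisor implements HA: it considers memory only, and it uses a first-fit-decreasing heuristic (largest VMs first) chosen here for illustration.

```python
def ha_restart(vms, capacity_gb, failed_host):
    """Hypothetical sketch of hypervisor HA: restart the VMs of a failed host
    on surviving hosts that have enough free memory.

    vms: dict of VM name -> (host, memory in GB)
    capacity_gb: dict of host -> total memory in GB
    Returns (placement dict of VM -> host or None, list of VMs that could not restart).
    """
    placement = {name: host for name, (host, _) in vms.items()}
    free = {h: cap for h, cap in capacity_gb.items() if h != failed_host}
    for host, mem in vms.values():
        if host != failed_host:
            free[host] -= mem
    stranded = []
    # Restart the largest VMs first (first-fit decreasing).
    victims = sorted(
        (name for name, (host, _) in vms.items() if host == failed_host),
        key=lambda name: -vms[name][1],
    )
    for name in victims:
        mem = vms[name][1]
        target = next((h for h, f in free.items() if f >= mem), None)
        if target is None:
            stranded.append(name)
            placement[name] = None
        else:
            free[target] -= mem
            placement[name] = target
    return placement, stranded


if __name__ == "__main__":
    vms = {"k8s-a-1": ("h1", 16), "k8s-b-1": ("h1", 32),
           "k8s-a-2": ("h2", 16), "k8s-b-2": ("h3", 48)}
    placement, stranded = ha_restart(vms, {"h1": 64, "h2": 64, "h3": 64}, "h1")
    print(placement)  # {'k8s-a-1': 'h2', 'k8s-b-1': 'h2', 'k8s-a-2': 'h2', 'k8s-b-2': 'h3'}
    print(stranded)   # []
```

Note that in this run both `k8s-a` node VMs end up on `h2`. A production HA implementation would also need to honor placement rules; this sketch does not.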

Agility

With virtualization, VMs can be created and deleted quickly, and virtual network settings can be configured for individual VMs just as fast. This makes Kubernetes more agile: tasks such as adding or removing cluster nodes, or creating and deleting entire clusters, can be completed in minutes.

In contrast, deploying Kubernetes nodes on bare metal generally takes longer. Adding nodes to an existing Kubernetes cluster usually takes hours, while creating a new Kubernetes cluster can take days. Once the deployment is complete, pods on bare metal achieve the same level of agility as those on VMs.

Scalability

Both VMs and bare metal can provide scalable infrastructure for Kubernetes. Deploying Kubernetes on VMs offers the advantage of flexibly migrating nodes (VMs) to hosts that have sufficient hardware resources, such as CPU, memory, and disk space. Meanwhile, deploying Kubernetes on bare-metal servers allows for straightforward use of resource requests and limits, load balancing, and resource quotas within a single Kubernetes cluster.
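Requests, limits, and quotas are Kubernetes objects, but the arithmetic they enforce is simple. The Python sketch below mimics two of the checks in a simplified form: a container's limit must not be lower than its request, and a namespace's total requested resources must stay within its quota. The function names are hypothetical, and CPU is expressed as plain integer millicores and memory as MiB instead of Kubernetes quantity strings such as `500m`. In a real cluster, quotas are enforced by the API server's admission control.

```python
def limits_valid(requests, limits):
    """A container's limit for a resource must not be lower than its request.
    Resources without a limit are unconstrained here."""
    return all(limits.get(res, amount) >= amount for res, amount in requests.items())


def fits_quota(quota, used, requests):
    """Admission-style check: would adding `requests` keep total requested
    resources within the namespace quota? Resources absent from the quota
    are not limited."""
    return all(
        used.get(res, 0) + amount <= quota[res]
        for res, amount in requests.items()
        if res in quota
    )


if __name__ == "__main__":
    quota = {"cpu": 4000, "memory": 8192}  # hypothetical: 4 CPUs (millicores), 8 GiB (MiB)
    used = {"cpu": 3000, "memory": 4096}   # hypothetical current usage in the namespace
    print(fits_quota(quota, used, {"cpu": 500, "memory": 1024}))   # True
    print(fits_quota(quota, used, {"cpu": 1500, "memory": 1024}))  # False
    print(limits_valid({"cpu": 500}, {"cpu": 250}))                # False
```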

However, each approach has its own drawbacks. When deploying on VMs, sharing resources among VMs can lead to overcommitment, so users must carefully balance scalability against system performance.
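The scalability-versus-performance trade-off of overcommitment comes down to simple ratios. This Python sketch assumes CPU is the only contended resource and ignores simultaneous multithreading, hypervisor overhead, and scheduler behavior; the VM sizes and core count are hypothetical.

```python
def overcommit_ratio(vm_vcpus, physical_cores):
    """Allocated vCPUs per physical core on one host (>1.0 means overcommitted)."""
    return sum(vm_vcpus) / physical_cores


def worst_case_core_share(vm_vcpus, physical_cores):
    """Fraction of a physical core each vCPU gets if every vCPU is busy at once."""
    return min(1.0, physical_cores / sum(vm_vcpus))


if __name__ == "__main__":
    vms = [8] * 12  # hypothetical: twelve 8-vCPU Kubernetes node VMs
    cores = 64      # hypothetical physical cores on the host
    print(overcommit_ratio(vms, cores))                 # 1.5
    print(round(worst_case_core_share(vms, cores), 2))  # 0.67
```

A ratio of 1.5 lets the host carry more nodes than its cores alone would allow, but if all node VMs peak together, each vCPU gets only about two-thirds of a core.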

When using bare-metal servers, adjusting hardware resources or updating software versions is less flexible and often requires hands-on work with physical hardware. For instance, adding servers, expanding memory or disk space, or reinstalling the operating system are often manual tasks. Additionally, without resource overcommitment, bare-metal servers may achieve lower resource utilization than virtualized infrastructure.

Security

Running Kubernetes on VMs enables resource isolation for each node, including the isolation of CPU, memory, disk, and network resources. The separation between VMs can help prevent potential attackers from easily accessing other nodes following a successful breach, adding an extra layer of security and aiding in protecting critical data and applications.

In a bare-metal environment, Linux kernel features such as cgroups and namespaces are used to isolate applications. Although containers share the same kernel, each has its own file system, network stack, and process namespace. This form of isolation is weaker than VM isolation: if an application inside a container is compromised, an attacker may be able to break out of the container and affect other containers or the host system. This risk can be mitigated by setting security policies and using security modules such as SELinux and AppArmor, although these measures increase operational complexity.

Cost and Investment

Deploying Kubernetes on bare metal can be more cost-effective because it eliminates virtualization costs. When only one dedicated Kubernetes cluster is needed, deploying it on VMs may require a higher investment, including the cost of virtualization software and management tools and the time spent learning these systems.

However, when multiple Kubernetes clusters must run concurrently, the total cost of VM-based clusters may not exceed that of a bare-metal solution, because a single virtualized cluster can host multiple Kubernetes clusters at the same time on shared hardware.
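The multi-cluster cost argument can be expressed as a toy model. The Python sketch below rests on loudly stated assumptions: every bare-metal node occupies a whole server, each VM node needs only `1 / vms_per_server` of a server, each cluster's nodes must sit on different hosts, and virtualization adds a per-server license plus a fixed cost (standing in for management tools and learning time). All parameter names and cost units are hypothetical, not real prices.

```python
import math


def bare_metal_cost(clusters, nodes_per_cluster, server_cost):
    """One Kubernetes node per physical server; servers are not shared between clusters."""
    return clusters * nodes_per_cluster * server_cost


def vm_cost(clusters, nodes_per_cluster, vms_per_server,
            server_cost, license_per_server, fixed_cost):
    """All clusters share one virtualized pool. Each cluster's nodes are kept
    on different hosts, so the pool needs at least nodes_per_cluster hosts."""
    total_vms = clusters * nodes_per_cluster
    servers = max(nodes_per_cluster, math.ceil(total_vms / vms_per_server))
    return servers * (server_cost + license_per_server) + fixed_cost


if __name__ == "__main__":
    # Hypothetical cost units, not real prices.
    params = dict(nodes_per_cluster=3, server_cost=10)
    for n in range(1, 5):
        bm = bare_metal_cost(n, **params)
        vm = vm_cost(n, vms_per_server=4, license_per_server=4, fixed_cost=20, **params)
        print(f"{n} cluster(s): bare metal {bm}, VMs {vm}")
```

With these made-up inputs, bare metal is cheaper for one or two clusters (30 and 60 versus 62), and the shared pool becomes cheaper from three clusters onward (90 and 120 versus 62). Where the crossover falls depends entirely on real prices and workload sizing.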

Furthermore, if both virtualized and containerized applications need to run, deploying Kubernetes on VMs can offer significant cost advantages, because it eliminates the need to build separate resource pools for different workloads.

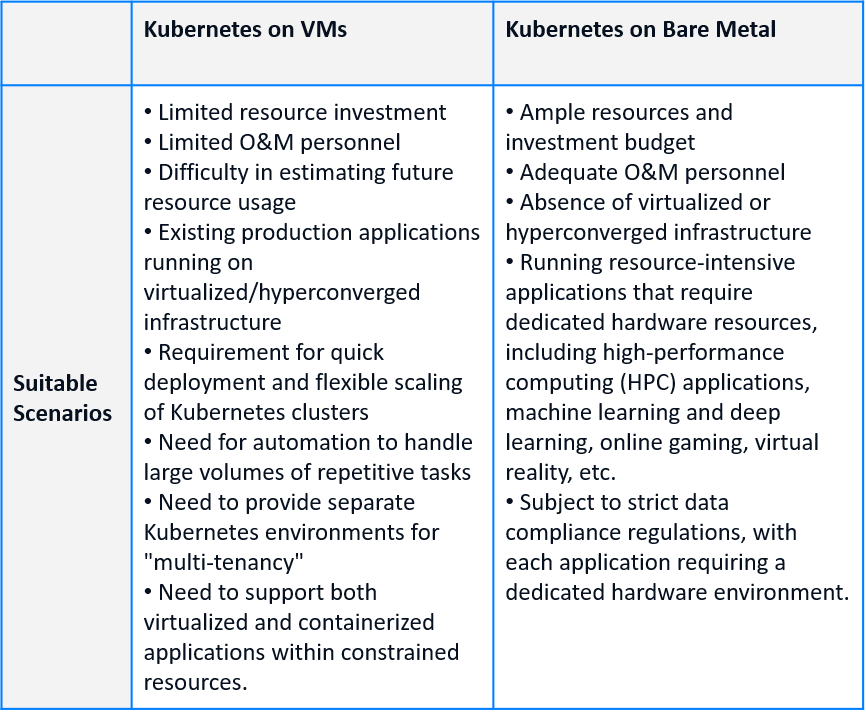

Final Thoughts: Decide Your Kubernetes Deployment Environment According to Enterprise Demands and Application Scenarios

In general, VM-based Kubernetes offers better resource integration and utilization, more flexible horizontal scaling, simpler and more efficient cluster lifecycle management, higher availability, and greater independence of the kernel, storage, and network. In certain scenarios, a bare-metal environment delivers better performance and lower costs because it avoids virtualization overhead.

Cloud transformation does not happen overnight, and there is no single right answer to which deployment environment suits Kubernetes best. Whether to use virtualization or hyperconvergence, switch to bare metal, or deploy in a hybrid environment should be decided carefully based on business goals and application scenarios.

Notably, according to Gartner’s “Market Guide for Container Management” report, although some enterprises have started running containers on bare metal, new enterprises are adopting this approach slowly. This is mainly due to the current state of operations and maintenance tools for bare-metal Kubernetes: although numerous, they are fragmented and struggle to form a comprehensive solution that meets production needs. Instead, solutions that support both virtualization and containers, such as running VMs on a Kubernetes platform or integrating Kubernetes with virtualized environments, are becoming the mainstream choice.

Recently, SmartX officially released SKS 1.0, a service for building and managing production-level Kubernetes. By integrating commonly used Kubernetes plugins with SmartX HCI components (such as virtualization, distributed storage, network, and security), SKS allows enterprises to easily deploy and manage production-level Kubernetes clusters, thereby building a comprehensive enterprise cloud infrastructure capable of supporting both virtualized and containerized applications. SKS not only offers the advantages of virtualization in efficiency, scalability and security, but also ensures high performance for stateful applications through the SmartX distributed storage CSI plugin.

To learn more about SKS, please refer to the following resources:

- Web page: SMTX Kubernetes Service

- Blog: SmartX Releases SKS 1.0, Offering One-Stop Solution to Build Production-Ready Kubernetes Clusters

- Document: SMTX Kubernetes Service 1.0.0 User Guide

Reference:

1. Market Guide for Container Management, Gartner

https://www.gartner.com/document/4012524