Many users leverage load balancers (LB) to optimize data traffic distribution, thereby improving the availability of application systems and preventing performance bottlenecks on physical servers. However, in a virtualized environment, traditional hardware-based LBs face challenges in delivering simple, flexible, and agile support as the volume and dynamics of data traffic in virtual networks increase rapidly.

To address the dynamic nature of data traffic in virtualization, SmartX introduces Everoute 2.0, a network and security product with an upgraded software-defined network load balancing feature. This feature enhances application performance and availability, offering unified support for both virtual machines (VMs) and containers running on SmartX HCI (ELF) clusters.

In this article, we will delve into the LB feature of Everoute 2.0 and explore its various use cases.

What Is “Software-defined” LB and Why Does It Matter?

With the maturation of container technologies, an increasing number of enterprises are adopting containerized applications to enhance their business agility. Simultaneously, data centers are transitioning from traditional, physical-server-centric environments to virtualized ones, where compute, storage, and network resources are software-defined.

These transformations have a profound impact on running workloads. In a virtualized/HCI environment, virtual networks play a dominant role, so clients communicate more often with virtual servers (VMs), and VMs communicate more often with each other. As a result, the volume of data within the VM network grows significantly. In addition, because VMs and business services change frequently, the access paths within VM networks become increasingly complex and dynamic. These changes require LBs to be simpler and more flexible.

Traditionally, enterprises have relied on LB appliances to balance data traffic in physical networks. However, this approach is not well-suited to the virtualized environment for the following reasons.

- Complex design and implementation: Users need to configure LB both when data flows from the client to the server and for internal traffic between servers. Implementing this with LB appliances not only complicates the process but also entails a substantial amount of manual labor and resource investment from the administrators.

- Hard to change configuration and architecture: Once hardware LBs are deployed, it is not easy to modify fundamental topologies. Should there be a need to modify the network routing and architecture, such as for rapid development of new services or system changes, not only is coordination across multiple departments required, but physical operations are also necessary. This is time-consuming and labor-intensive, making it difficult to complete in a short period of time, and thus unable to meet the requirements for fast deployment of new services.

- Complex communication between VMs: If both the client and server operate within a virtualized environment, and LB appliances are still used, the data path must be routed from the virtual network through physical L2/L3 connections to the LB appliances, and then back to the target virtual network. This process is complex and inefficient.

In contrast, “software-defined” LBs, which run on VMs and use those VMs to distribute traffic, are typically designed for virtualized/HCI/cloud environments and address the above challenges in the following ways.

- Automatic deployment of virtualized LBs: Reduce manual operations.

- Configure based on virtual network topology and support one-arm or two-arm service modes: Allow flexible configuration of LBs without the need to change physical network architecture or configurations on appliances.

- Manage LB strategies on virtualization/HCI GUI: Reduce the complexity of policy management and the overall O&M burden.

In addition, according to Gartner’s report “Enterprise Network Equipment by Market Segment, Worldwide, 2Q23”, over 70% of Application Delivery Controller (ADC) products (which include products providing network load balancing services) were delivered in the form of software during 2022-2023. The adoption of software-defined LBs continues to grow, while the use of LB appliances has stagnated or declined. This trend also reflects enterprises’ increasing recognition of the value and advantages of software-defined LBs.

Software-defined LB Provided in Everoute 2.0

Everoute provides software-defined networking and security, with unified management, for both virtualized and containerized applications running on SmartX HCI. While Everoute 1.0 features the distributed firewall function, Everoute 2.0 adds a software-defined network load balancer that serves VM and container instances on HCI clusters.

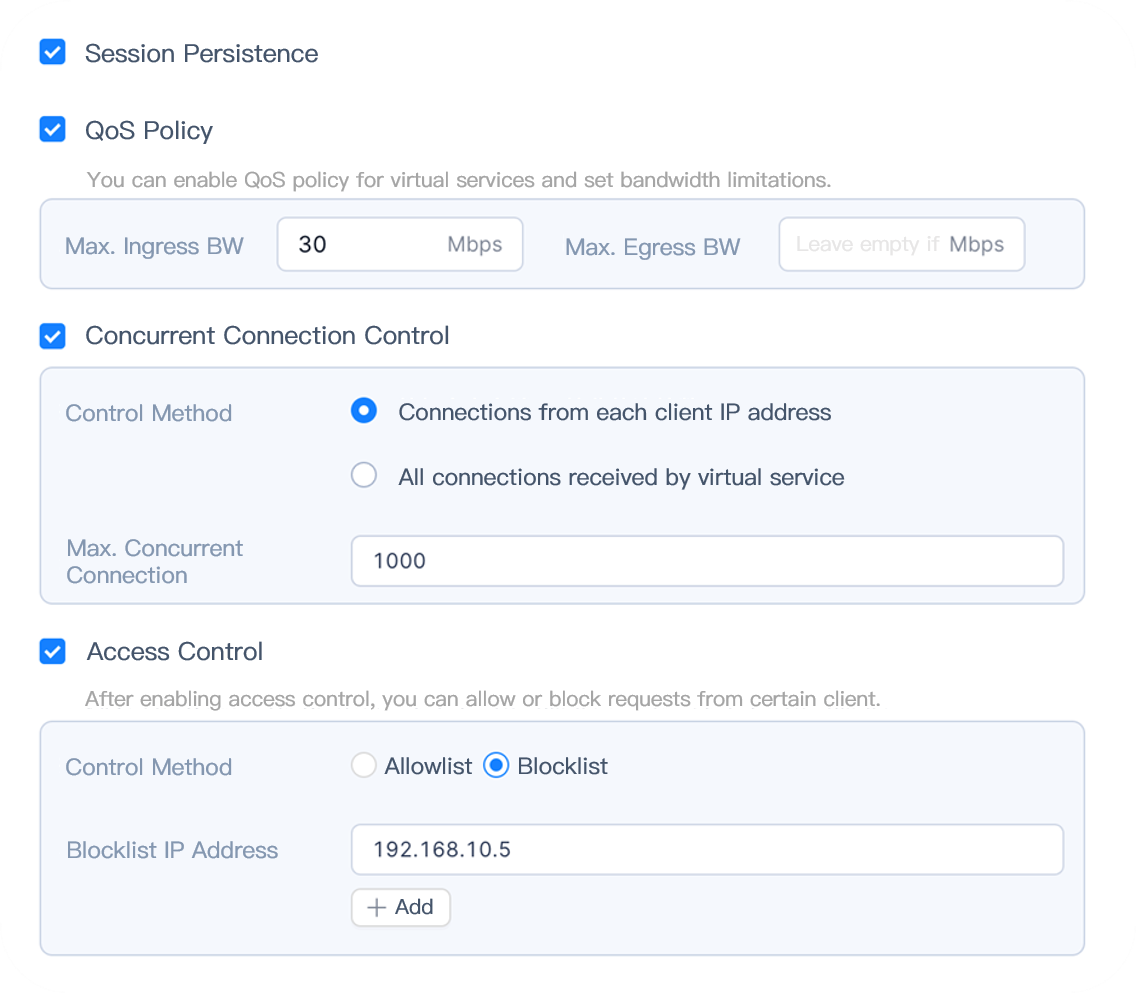

Everoute LB provides Layer 4 load-balancing services for applications running on VMs, containers, or physical servers. It can improve application performance and reliability by evenly distributing data traffic to multiple real servers based on predefined algorithms, using the IP addresses and port information in data packets. Leveraging active-active and active-standby mechanisms, it also minimizes service downtime with smooth application instance failover, and protects applications and resources through access control and QoS (bandwidth and connection limits) on instances.

Because Everoute LB is deployed on SmartX’s native hypervisor, ELF, it is managed using a “software-defined” approach: administrators configure and manage its functions and related components in one place through SmartX’s centralized management platform, CloudTower. Everoute LB VMs can flexibly associate with different virtual networks in the ELF environment, which simplifies the forwarding path of data traffic.

Key Features

Balancing application workloads

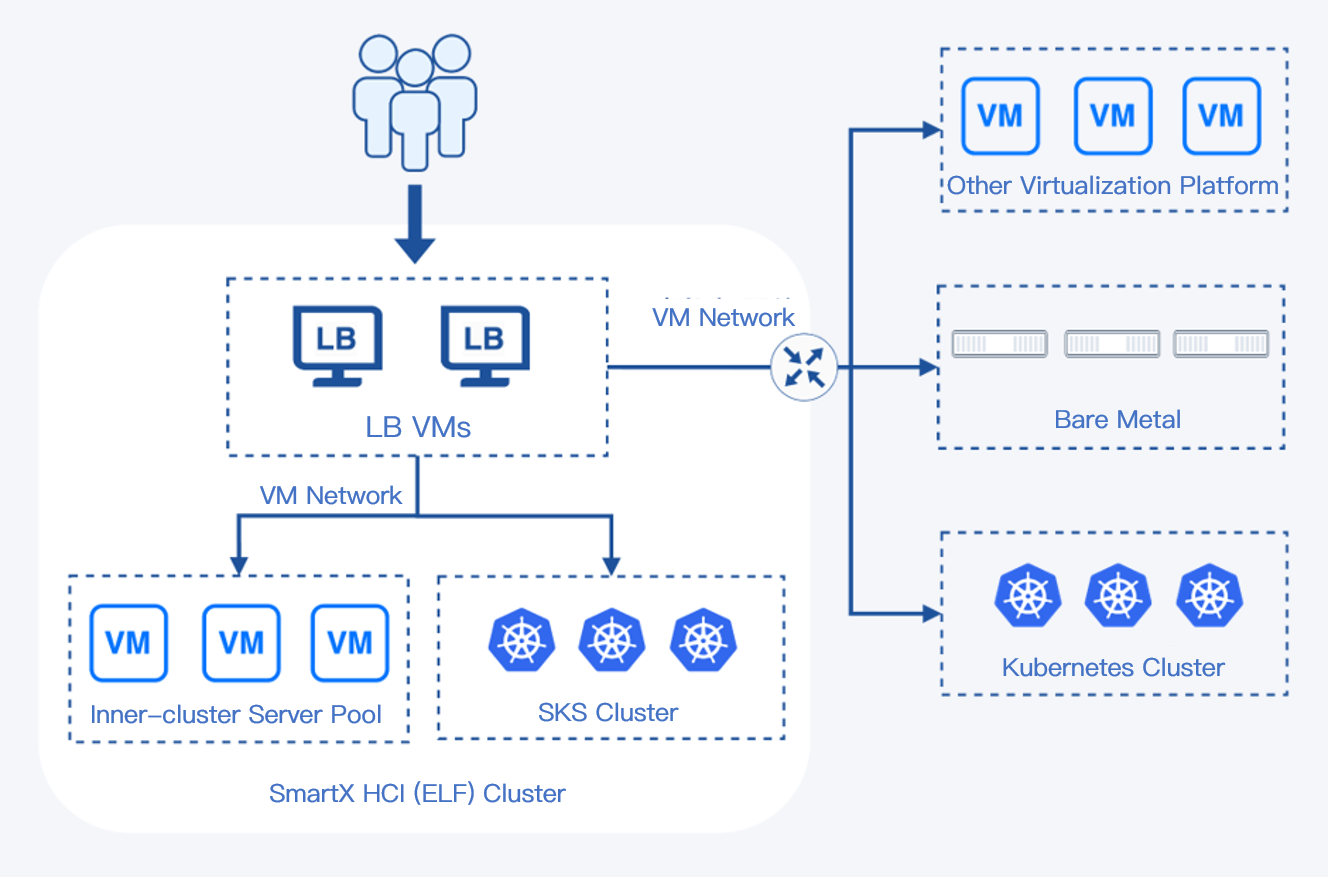

Everoute LB creates a virtual service for each supported application and associates the virtual service with the server pool of that application. It receives client requests on a virtual IP address and port, and distributes them to real servers based on scheduling policies. This balances the traffic load and improves server resource utilization. Everoute LB can provide load-balancing services for real servers whether they are containers, VMs, or physical servers, and whether they run inside or outside the SmartX ELF cluster.
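The relationship between a virtual service and its server pool can be pictured as a small lookup table. The following is a minimal sketch for illustration only, not Everoute's data model: the class names, the `pool_for` helper, and the IP addresses are all hypothetical.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional, Tuple


@dataclass(frozen=True)
class RealServer:
    """One backend: a VM, a container, or a physical server."""
    address: str
    port: int


@dataclass
class VirtualService:
    """A front end (virtual IP + port) bound to one application's server pool."""
    vip: str
    port: int
    pool: List[RealServer] = field(default_factory=list)


class VirtualServiceTable:
    """Hypothetical lookup table keyed by (virtual IP, port)."""

    def __init__(self) -> None:
        self._services: Dict[Tuple[str, int], VirtualService] = {}

    def register(self, service: VirtualService) -> None:
        self._services[(service.vip, service.port)] = service

    def pool_for(self, vip: str, port: int) -> Optional[List[RealServer]]:
        """Return the server pool for a request, or None if no service matches."""
        service = self._services.get((vip, port))
        return service.pool if service is not None else None


# Example addresses are made up for illustration.
table = VirtualServiceTable()
table.register(VirtualService("10.0.0.100", 443, [
    RealServer("192.168.1.11", 8443),  # e.g. a VM inside the cluster
    RealServer("172.16.5.20", 8443),   # e.g. a physical server outside it
]))
```

A request arriving on `10.0.0.100:443` resolves to the two-server pool, and a scheduling policy then picks one of those servers.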

Rich load balancing algorithms

Everoute LB provides a variety of load balancing algorithms to cater to the diverse demands of multiple application scenarios, including round-robin, weighted round-robin, least connections, weighted least connections, source IP address hash, and destination IP address hash.
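To make the differences between these algorithms concrete, here is a minimal sketch of four of them in Python. These are textbook versions written for illustration; the function names are hypothetical, and nothing here is taken from Everoute's implementation. In particular, the weighted variant simply repeats each server according to its integer weight, which is the simplest possible scheme.

```python
import hashlib
import itertools
from typing import Dict, Iterator, List


def round_robin(servers: List[str]) -> Iterator[str]:
    """Yield servers in a fixed, repeating order."""
    return itertools.cycle(servers)


def weighted_round_robin(weights: Dict[str, int]) -> Iterator[str]:
    """Yield servers in proportion to their integer weights.

    Simplest form: repeat each server `weight` times, then cycle.
    """
    expanded = [server for server, w in weights.items() for _ in range(w)]
    return itertools.cycle(expanded)


def least_connections(active: Dict[str, int]) -> str:
    """Pick the server with the fewest active connections."""
    return min(active, key=active.get)


def source_ip_hash(client_ip: str, servers: List[str]) -> str:
    """Map a client IP to a server so the same client keeps the same server."""
    digest = hashlib.sha256(client_ip.encode("utf-8")).digest()
    return servers[int.from_bytes(digest[:8], "big") % len(servers)]
```

Round-robin variants suit pools of similar, stateless servers. Least connections helps when request durations vary widely, and source IP hashing keeps a given client on the same server as long as the pool does not change.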

Comprehensive and proactive health check

Everoute LB periodically performs proactive health checks on the backend servers over TCP, HTTP, UDP, and ICMP. It supports configuring multiple health monitors for the same group of real servers, enabling a thorough health assessment of server pools.
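As an illustration of how several monitors can be combined for one pool, the sketch below defines a basic TCP connect check and a helper that filters a pool down to its healthy members. It assumes a server counts as healthy only when every configured monitor passes; that policy, the function names, and the 2-second timeout are assumptions for the example, not documented Everoute behavior.

```python
import socket
from typing import Callable, Iterable, List, Tuple

# A monitor takes (host, port) and reports whether the check passed.
Monitor = Callable[[str, int], bool]


def tcp_check(host: str, port: int, timeout: float = 2.0) -> bool:
    """Return True if a TCP connection to host:port succeeds within timeout."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        return False


def is_healthy(host: str, port: int, monitors: Iterable[Monitor]) -> bool:
    """Assumed policy: a server is healthy only if every monitor passes."""
    return all(monitor(host, port) for monitor in monitors)


def healthy_servers(pool: List[Tuple[str, int]],
                    monitors: Iterable[Monitor]) -> List[Tuple[str, int]]:
    """Return the subset of the pool that passes all monitors."""
    monitors = list(monitors)  # allow reuse across servers
    return [(h, p) for h, p in pool if is_healthy(h, p, monitors)]
```

Calling `healthy_servers(pool, [tcp_check])` on a schedule and handing only the result to the scheduling algorithm is one simple way to keep traffic away from failed servers.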

Active-active and active-standby mechanisms ensure high availability of virtual services

Each load-balancing instance includes Active and Standby roles deployed on different LB VMs. The virtual IP address is configured on the current Active role’s VM, and periodic heartbeat checks are performed using this IP. If the Active LB VM fails, the virtual IP address automatically migrates to the Standby role’s VM, ensuring virtual service availability. Different virtual services are distributed across multiple load-balancing instances to optimize resource utilization.
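The active-standby behavior described above can be modeled as a small state machine. The sketch below is a simplified simulation, not Everoute's protocol: the `LBInstance` class, the VM names, and the threshold of three missed heartbeats are hypothetical values chosen for illustration.

```python
from dataclasses import dataclass


@dataclass
class LBInstance:
    """One load-balancing instance with an Active and a Standby LB VM."""
    vip: str
    active: str
    standby: str
    max_missed: int = 3  # hypothetical failover threshold
    missed: int = 0

    def on_heartbeat(self, ok: bool) -> str:
        """Record one heartbeat result; return the VM that now holds the VIP."""
        if ok:
            self.missed = 0
            return self.active
        self.missed += 1
        if self.missed >= self.max_missed:
            # Fail over: the virtual IP moves to the former Standby VM.
            self.active, self.standby = self.standby, self.active
            self.missed = 0
        return self.active
```

Running several such instances, each Active on a different LB VM and each carrying a share of the virtual services, gives the active-active spread across VMs while every individual instance stays active-standby.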

Application traffic control and concurrent connection management

Everoute LB allows administrators to set inbound and outbound traffic limits for virtual services and to limit the number of concurrent connections between clients and virtual services. This prevents any single virtual service or client from monopolizing resources, keeps resource allocation balanced, and mitigates the impact of DoS attacks on the system.
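Both kinds of limit can be sketched with two small classes: a counter for concurrent connections and a token bucket for bandwidth. The token bucket is a common general-purpose rate-limiting technique used here for illustration; the source does not say which mechanism Everoute uses, and the class and parameter names are hypothetical.

```python
class ConnectionLimiter:
    """Caps the number of concurrent connections to one virtual service."""

    def __init__(self, max_connections: int) -> None:
        self.max_connections = max_connections
        self.active = 0

    def try_open(self) -> bool:
        """Admit a new connection if the cap has not been reached."""
        if self.active >= self.max_connections:
            return False
        self.active += 1
        return True

    def close(self) -> None:
        self.active = max(0, self.active - 1)


class TokenBucket:
    """Bandwidth limit: refills at `rate` bytes/s, up to `burst` bytes."""

    def __init__(self, rate: float, burst: float) -> None:
        self.rate = rate
        self.capacity = burst
        self.tokens = burst
        self.last = 0.0  # timestamp of the previous call, in seconds

    def allow(self, nbytes: int, now: float) -> bool:
        """Return True if `nbytes` may pass at time `now` (seconds)."""
        elapsed = now - self.last
        self.last = now
        self.tokens = min(self.capacity, self.tokens + elapsed * self.rate)
        if nbytes <= self.tokens:
            self.tokens -= nbytes
            return True
        return False
```

Passing the timestamp in explicitly keeps the sketch deterministic; a real limiter would typically read a monotonic clock instead.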

Values and Advantages

- Software-defined: Achieves network virtualization purely through software, with no extra need to purchase, deploy, or maintain dedicated hardware devices or adjust physical network configurations.

- Simple operations and maintenance: Integrates load balancing functions into the hyperconverged platform, enabling convenient management of both the infrastructure and load balancer on the CloudTower GUI.

- High availability & efficiency: Achieves high availability and efficiency through a combination of active-active and active-standby mechanisms, preventing single points of failure and improving service quality.

- Flexible adaptation: Provides load-balancing services for applications running in different locations and forms.

Everoute LB Application Scenarios

Balancing data traffic and resources across multiple application instances

Everoute LB is mainly responsible for distributing network traffic and ensuring that no single server or application instance is overloaded. This is crucial for applications that handle a large number of concurrent requests. By distributing data traffic across multiple servers or instances, Everoute LB enhances overall processing capacity and reduces response times. Additionally, it dynamically adjusts traffic distribution based on each instance’s current load and resource utilization to further optimize performance.

High availability and rapid failover for applications

Everoute LB continuously monitors the health of real servers and application instances. If an instance fails or experiences performance degradation, the load balancer quickly redirects traffic to other healthy instances, maintaining application availability. This rapid failover capability is critical for mission-critical business applications that need to run 24/7.

Applications’ coexistence and migration across heterogeneous IT infrastructure

Many users are migrating applications from physical servers to virtualization or Kubernetes. In this scenario, Everoute LB plays an important role as it enables the coexistence and smooth migration of applications between different environments.

For example, Everoute LB can distribute traffic destined for one application to both physical servers and VMs at the same time, allowing them to run in parallel. Then, by gradually adjusting the traffic distribution weights, all workloads can be migrated to VMs without service interruption. During this process, the LB dynamically adapts traffic allocation based on the performance and health status of each instance, ensuring optimal service quality. This applies both to migration from physical servers to virtualization or containers and to migration between different virtualization environments, for instance, migrating applications from vSphere VMs to other virtualization platforms.
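The gradual weight adjustment can be pictured as a schedule of weight pairs that shifts traffic from the old environment to the new one. The sketch below is illustrative only: the `migration_weights` function, the 0-100 weight scale, and the equal step sizes are assumptions, and in practice each step would be applied only after the new instances are observed to be healthy.

```python
from typing import List, Tuple


def migration_weights(steps: int) -> List[Tuple[int, int]]:
    """Return (physical_weight, vm_weight) pairs that shift traffic to VMs.

    Weights are on an assumed 0-100 scale and always sum to 100.
    The first pair sends all traffic to physical servers, the last to VMs.
    """
    if steps < 1:
        raise ValueError("steps must be at least 1")
    schedule = []
    for i in range(steps + 1):
        vm_weight = round(100 * i / steps)
        schedule.append((100 - vm_weight, vm_weight))
    return schedule


# Four equal steps: 0%, 25%, 50%, 75%, then 100% of traffic on VMs.
for physical, vm in migration_weights(4):
    print(f"physical={physical:3d}  vm={vm:3d}")
```

Each pair would be fed to a weighted algorithm such as weighted round-robin, so the share of requests reaching VMs grows step by step while the physical servers keep serving the rest.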

More information on Everoute:

Website: https://www.smartx.com/global/everoute/

Everoute Whitepaper: https://www.smartx.com/global/resource/doc/everoute-whitepaper/

Everoute Demo: Distributed Firewall and Load Balancer

More information on SmartX HCI 5.1 & upgraded features:

Introducing SmartX HCI 5.1, Full Stack HCI for Both Virtualized and Containerized Apps in Production

GPU Passthrough & vGPU: Using GPU Application in Virtualization with SMTX OS 5.1

Improving Resource Utilization: Innovative Implementation of DRS in SmartX HCI

Network I/O Virtualization in SmartX HCI: Virtual NIC, PCI Pass-through and SR-IOV Pass-through

Preserving Data Integrity with Temporary Replica Strategy of SmartX HCI

Eliminate Virtual Network Blind Spots with SmartX Network Visualization

Reference

Market Share: Enterprise Network Equipment by Market Segment, Worldwide, 2Q23. Gartner.